Particle memory limitations and workarounds

18 September 2018 at 10:57 am

When coding in C/C++ it’s crucial to do memory management correct. For a device that is expected to “just work” you simply cannot have any memory leaks. The simple way to solve this is to never allocate memory dynamically. By just instantiating all objects statically, you can be guaranteed that you always have enough memory. What do you do when you past this?

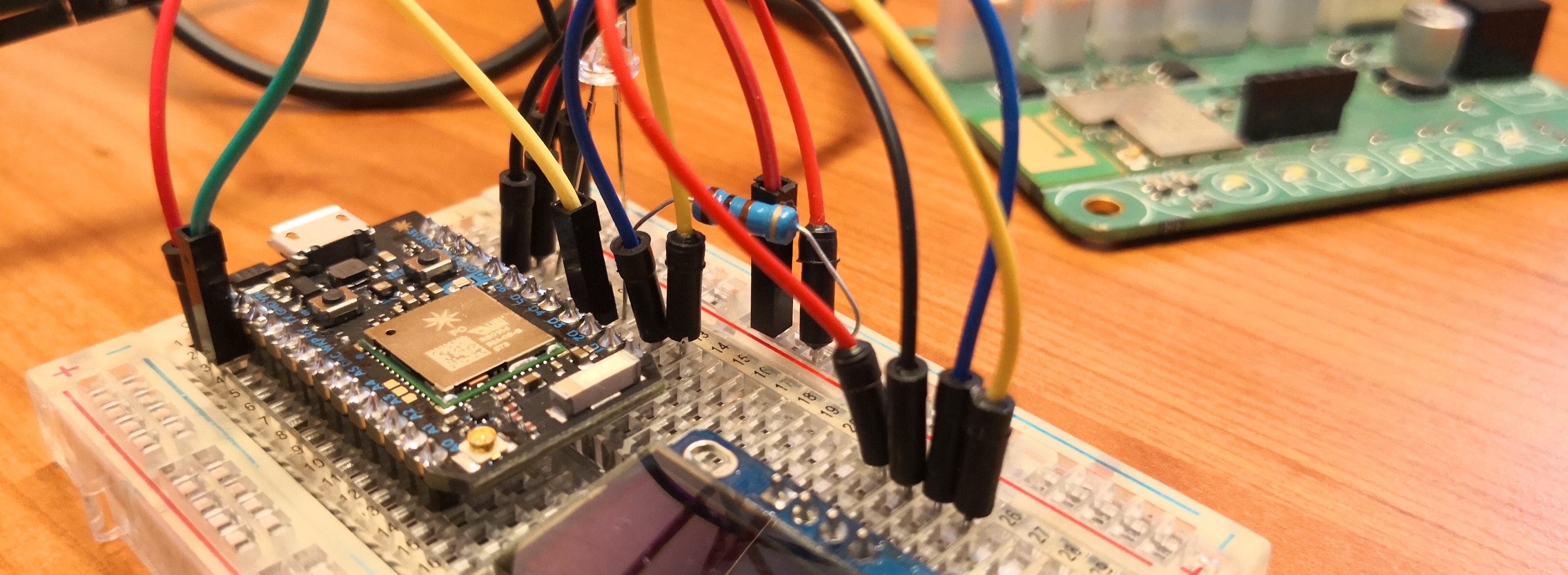

With the first Particle hardware platform called Spark Core, you had about 108Kb for your program and 35Kb of runtime memory available. With the Particle Photon, you have 128Kb program and about 60Kb runtime. This extra memory does however come with an undocumented limitation - for the Setup wifi feature (known as Listen Mode) to work, you have to leave at least 21.5Kb of the 60Kb free. You are free to use more, but if you do so the Listen Mode will not work correctly. Saving credentials will seem to work, but eventually fail.

If you’ve gotten to this, the trick is of course to start allocating memory dynamically instead of statically. That way you can utilise the same memory for multiple things. Jumping through some hoops and rethinking what needed to be in memory at what time, we found a fairly simple solution. We have a lot of debug features that take up valuable memory. Customers will normally not use these, so by making it all dynamic we suddenly had a lot of memory to spare. Here’s how the memory usage looked before and after:

Memory use: text data bss dec note 89884 2148 43360 135392 (before dynamic allocation) 89868 2148 33128 125144 (after dynamic allocation of a large buffer) 90012 2148 18468 110628 (Debug screens moved to RAM)

We basically halved the memory usage without sacrificing any features by just rethinking what we needed to load at what times in the program. I’m quite happy with how this turned out and I wanted to share in case others run into the same issue. Big shoutout the the great community and employees at Particle that helped solve this issue!